Why the best innovations often hide in the most boring features

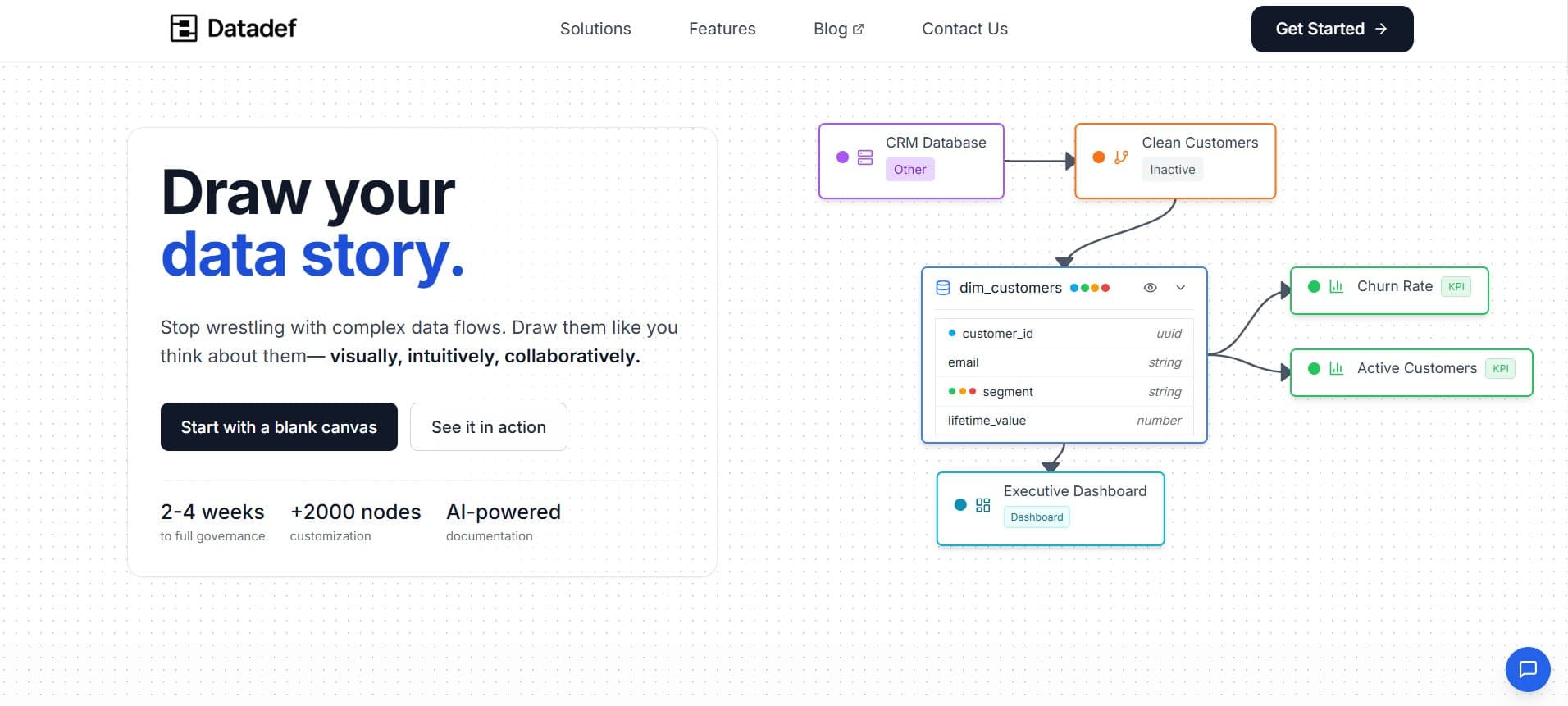

I’m a senior IT and data consultant with a passion for building tools that make engineers’ lives easier. After years of seeing data teams struggle with documentation and lineage, I decided to create Datadef to simplify the process. I love turning real-world pain points into practical solutions that save time and reduce frustration.

I've been building software for long enough to know that the features you think will change everything usually don't, and the ones that actually do change everything are the ones you barely thought about when you shipped them. Case in point: Datadef's JSON export feature.

Six months ago, if you'd told me that a mundane "Export Project" button would become the cornerstone of an entirely new way of thinking about data modeling, I would have laughed. Today, I'm watching myself spin up complex data lineage diagrams in under 30 seconds, and I'm still a little stunned by how we got here.

A geometry problem

Let me start with a fundamental truth about AI that took me way too long to internalize: AI doesn't see the world the way we do.

When you're building a visual tool like Datadef, where users drag nodes around a canvas, connect them with edges, and build these beautiful, sprawling maps of their data architecture, you naturally think in terms of spatial relationships. Node A goes here, Node B connects to it there, the whole thing needs to look balanced and readable.

But ask an LLM to generate a canvas layout directly, and you'll get something that looks like it was designed by someone wearing a blindfold. The models are incredible at understanding relationships, dependencies, and hierarchies, but they're terrible at translating that understanding into X/Y coordinates that actually make visual sense.

This became painfully obvious during my early experiments with AI-generated templates. I'd feed GPT-4 a description like "create a data pipeline for an e-commerce analytics system," and it would dutifully generate all the right components (customer data, order processing, inventory management, reporting layers), but the spatial arrangement would be completely nonsensical: nodes overlapping, edges crossing at bizarre angles, the whole thing looking like a bowl of spaghetti.

Using simple JSON

The breakthrough came from an unexpected direction. Months earlier, I'd built what I thought was just a basic utility feature: the ability to export entire Datadef projects as JSON files. The use case seemed straightforward: users wanted to share their work, back it up, maybe import it into different environments.

The technical implementation was relatively simple. Take everything that makes up a project (nodes, edges, documentation, glossary terms, metadata) and serialize it into a structured format:

    {
      "nodes": [
        {
          "id": "customer_data",
          "type": "data_source",
          "position": {"x": 100, "y": 200},
          "properties": {
            "name": "Customer Database",
            "description": "Core customer information...",
            "schema": {...}
          }
        }
      ],
      "edges": [
        {
          "source": "customer_data",
          "target": "analytics_pipeline",
          "relationship_type": "feeds_into"
        }
      ],
      "documentation": {...},
      "glossary": {...}
    }

What I didn't realize at the time was that I'd accidentally solved the AI geometry problem. JSON is a language that LLMs speak fluently. They can read it, understand its structure, learn patterns from it, and, most importantly, generate new, valid JSON that follows those same patterns.

Instead of asking AI to think spatially, I could ask it to think structurally. Instead of "place these nodes on a canvas," I could ask "generate a JSON representation of this data architecture." The visual layout could be handled separately, using algorithms that actually understand geometry.
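To make that split concrete, here is a minimal sketch of what the separate layout step could look like. It is not Datadef's actual layout code: the function name `layered_layout`, the spacing values, and the column-per-depth strategy are all assumptions for illustration. It reads only the `nodes` and `edges` keys from the export format and puts each node in a column based on how far downstream it sits.

```python
from collections import defaultdict, deque


def layered_layout(project, x_gap=250, y_gap=120):
    """Assign x/y positions: one column per dependency depth.

    Hypothetical helper, not part of Datadef. Depth is the longest path
    from any node that has no incoming edges.
    """
    node_ids = [node["id"] for node in project["nodes"]]
    known = set(node_ids)
    children = defaultdict(list)
    indegree = {node_id: 0 for node_id in node_ids}

    for edge in project["edges"]:
        source, target = edge["source"], edge["target"]
        # Ignore edges that point at nodes missing from this project.
        if source in known and target in known:
            children[source].append(target)
            indegree[target] += 1

    # Kahn's algorithm: walk the graph in topological order.
    depth = {node_id: 0 for node_id in node_ids}
    queue = deque(node_id for node_id in node_ids if indegree[node_id] == 0)
    while queue:
        node_id = queue.popleft()
        for child in children[node_id]:
            depth[child] = max(depth[child], depth[node_id] + 1)
            indegree[child] -= 1
            if indegree[child] == 0:
                queue.append(child)

    # Nodes caught in a cycle are never dequeued; they keep the depth
    # they had when the walk stopped.
    rows_used = defaultdict(int)
    positions = {}
    for node_id in node_ids:
        column = depth[node_id]
        positions[node_id] = {"x": column * x_gap, "y": rows_used[column] * y_gap}
        rows_used[column] += 1
    return positions


project = {
    "nodes": [
        {"id": "customer_data"},
        {"id": "orders"},
        {"id": "analytics_pipeline"},
        {"id": "dashboard"},
    ],
    "edges": [
        {"source": "customer_data", "target": "analytics_pipeline"},
        {"source": "orders", "target": "analytics_pipeline"},
        {"source": "analytics_pipeline", "target": "dashboard"},
    ],
}
positions = layered_layout(project)
```

In this example the two sources land in the first column, the pipeline in the second, and the dashboard in the third. A real layout engine would also minimize edge crossings, which this sketch does not attempt.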

The 30-second miracle

Once this clicked, everything changed. I started feeding sample Datadef projects to GPT-5, watching it learn the patterns of how we represent different types of data architectures in JSON. Then I flipped it around: give it a natural language description and ask it to generate the JSON for a complete project.

The results were genuinely stunning. A user could type something like:

"I'm building a recommendation engine for a streaming platform. I need to track user viewing behavior, content metadata, generate embeddings for similarity matching, and feed recommendations back to the frontend."

And within seconds, they'd have a complete workspace: data ingestion nodes, processing pipelines, ML model components, API endpoints, all properly connected and documented. Not just the high-level architecture, but the detailed schemas, the relationship types, even suggested glossary terms for domain-specific concepts.

What used to take experienced data architects hours of careful planning and layout now happened in the time it takes to grab a coffee.
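The "feed it samples, then flip it around" setup can be sketched roughly as follows. This is an illustration under assumptions, not the prompt Datadef actually uses: the system prompt wording, the helper names `build_messages` and `parse_project`, and the role/content message shape (the format most chat-style model APIs accept) are all hypothetical. The sketch deliberately stops short of calling any model API.

```python
import json

# Hypothetical instruction text; the real prompt is not described in this post.
SYSTEM_PROMPT = (
    "You generate Datadef project files. Reply with a single JSON object "
    "containing the keys: nodes, edges, documentation, glossary."
)


def build_messages(sample_projects, description):
    """Build a few-shot chat prompt from (description, project) sample pairs."""
    messages = [{"role": "system", "content": SYSTEM_PROMPT}]
    for sample_description, sample_project in sample_projects:
        messages.append({"role": "user", "content": sample_description})
        messages.append(
            {"role": "assistant", "content": json.dumps(sample_project, indent=2)}
        )
    # The new request goes last, so the model answers in the same format.
    messages.append({"role": "user", "content": description})
    return messages


def parse_project(reply_text):
    """Parse a model reply, failing loudly if it is not a usable project."""
    project = json.loads(reply_text)
    if not isinstance(project, dict):
        raise ValueError("expected a JSON object at the top level")
    missing = {"nodes", "edges"} - set(project)
    if missing:
        raise ValueError(f"project is missing keys: {sorted(missing)}")
    return project


sample = {
    "nodes": [{"id": "customer_data", "type": "data_source"}],
    "edges": [],
}
messages = build_messages(
    [("A single customer database.", sample)],
    "A recommendation engine for a streaming platform.",
)
```

The resulting `messages` list would be sent to whichever model is in use, and the reply passed through `parse_project` before anything touches the canvas.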

Beyond templates: The conversational workspace

But here's where it gets really interesting. Once you have AI that can generate complete project structures from natural language, you're not limited to just starter templates. You can have conversations with your workspace.

"Add a real-time fraud detection component to this payment processing pipeline."

"Reorganize this to follow a medallion architecture pattern."

"Generate documentation for all the nodes in the customer journey section."

Each request results in precise JSON modifications that the system can apply instantly. The workspace becomes fluid, responsive to natural language in a way that traditional GUI interactions never could be.

I've watched users iterate on complex data architectures by simply describing what they want to change, rather than clicking through dozens of property panels and relationship dialogs. It's like having a conversation with your diagram, and the diagram actually understands what you're saying.
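Because every conversational edit comes back as JSON, it is cheap to sanity-check the result before applying it. The sketch below is one way that check might look; `find_problems` is a hypothetical helper rather than a Datadef function, and it only assumes the `nodes` and `edges` structure from the export example.

```python
def find_problems(project):
    """Return a list of human-readable integrity problems (empty if none)."""
    problems = []
    seen_ids = set()

    for node in project.get("nodes", []):
        node_id = node.get("id")
        if not node_id:
            problems.append("node without an id")
        elif node_id in seen_ids:
            problems.append(f"duplicate node id: {node_id}")
        else:
            seen_ids.add(node_id)

    # Every edge endpoint must refer to a node that actually exists.
    for edge in project.get("edges", []):
        for end in ("source", "target"):
            if edge.get(end) not in seen_ids:
                problems.append(f"edge {end} refers to unknown node: {edge.get(end)}")

    return problems


# A trimmed project: the edge points at a node that was never defined.
trimmed = {
    "nodes": [{"id": "customer_data", "type": "data_source"}],
    "edges": [
        {
            "source": "customer_data",
            "target": "analytics_pipeline",
            "relationship_type": "feeds_into",
        }
    ],
}
problems = find_problems(trimmed)
```

Here `problems` contains a single entry flagging `analytics_pipeline` as an unknown target, which is exactly the kind of dangling reference a model can produce when it edits a large project.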

JSON limitations and what's next

Now, let me be honest about where we are versus where we're heading. Right now, this conversational interaction is still pretty basic: it's essentially AI generating modified JSON blobs that get imported back into Datadef. It works, but it's a bit like having a brilliant architect who can only communicate through detailed blueprints rather than walking around the construction site with you.

The real future lies in proper API integration. What I'm excited about is implementing something like the Model Context Protocol (MCP) or building dedicated API endpoints that let AI interact directly with Datadef's internal systems. Instead of "generate new JSON and replace everything," we'd have granular operations like:

    addNode(type, position, properties)
    updateDocumentation(nodeId, content)
    createRelationship(source, target, type)
    reorganizeLayout(pattern, constraints)

This isn't just cleaner architecturally; it opens up entirely new possibilities. AI could make incremental changes while preserving user customizations, maintain undo/redo history properly, and even collaborate with multiple users in real time. The conversational workspace stops being a party trick and becomes a genuine new interface paradigm.

Think about it: instead of learning complex software interfaces, users could just talk to their tools. The JSON approach proved the concept works, but proper API integration will make it feel natural.
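To show why granular operations make undo straightforward, here is a small in-memory sketch. None of this exists in Datadef today: the `Workspace` class is hypothetical, the method names are Python-style renderings of the operations listed above, the explicit `node_id` argument is an added assumption, and storing documentation as a mapping from node id to text is a guess at a structure the export example leaves elided.

```python
import copy


class Workspace:
    """In-memory stand-in for a project that accepts granular operations."""

    def __init__(self, project=None):
        self.project = project or {"nodes": [], "edges": [], "documentation": {}}
        self._history = []  # snapshots taken before each change

    def _snapshot(self):
        self._history.append(copy.deepcopy(self.project))

    def add_node(self, node_id, node_type, position, properties):
        if any(node["id"] == node_id for node in self.project["nodes"]):
            raise ValueError(f"node already exists: {node_id}")
        self._snapshot()
        self.project["nodes"].append(
            {"id": node_id, "type": node_type, "position": position, "properties": properties}
        )

    def create_relationship(self, source, target, relationship_type):
        node_ids = {node["id"] for node in self.project["nodes"]}
        if source not in node_ids or target not in node_ids:
            raise ValueError("both ends of a relationship must be existing nodes")
        self._snapshot()
        self.project["edges"].append(
            {"source": source, "target": target, "relationship_type": relationship_type}
        )

    def update_documentation(self, node_id, content):
        self._snapshot()
        self.project.setdefault("documentation", {})[node_id] = content

    def undo(self):
        """Revert the most recent operation, if there is one."""
        if self._history:
            self.project = self._history.pop()


workspace = Workspace()
workspace.add_node("payments", "data_source", {"x": 0, "y": 0}, {"name": "Payments"})
workspace.add_node("fraud_detection", "processor", {"x": 250, "y": 0}, {"name": "Fraud Detection"})
workspace.create_relationship("payments", "fraud_detection", "feeds_into")
workspace.undo()  # removes only the relationship; both nodes remain
```

Snapshotting the whole project before each change keeps the sketch short. A production version would more likely record an inverse operation per change, which is also what would make multi-user collaboration tractable.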

Datadef usage: a compounding effect

What's fascinating is how this creates a compounding effect, though not in the way you might initially think. Let me be clear upfront: Datadef doesn't access user project data. Your architectures, your business logic, your sensitive information, all of that stays completely private.

But here's what's interesting: the patterns of how people structure data architectures are surprisingly consistent across industries, even when the specific content is completely different. The AI learns these structural patterns from publicly available examples, documentation, and architectural best practices, not from user data.

When a user describes building "a real-time fraud detection system for payment processing," the AI draws from its understanding of common fraud detection patterns, typical payment processing flows, and industry-standard architectures.

The result is still remarkably contextual. A user working on healthcare data pipelines gets suggestions that naturally incorporate HIPAA compliance patterns, proper audit logging, and the data segregation approaches common in that space. Someone building financial analytics systems gets architectures that reflect standard risk management frameworks and regulatory reporting structures.

The AI doesn't just generate generic templates; it generates contextually appropriate architectures that feel like they were designed by someone who actually understands your problem space, while keeping your actual implementation details completely private.

Accidental innovation: from a boring feature to exciting opportunities

This whole experience has shifted how I think about the relationship between AI and creative tools. We often imagine AI as this separate assistant that we consult for specific tasks. But what I'm seeing with Datadef is something more integrated: AI as a medium for creative expression, not just a helper.

When you can describe complex systems in natural language and have them materialize as structured, visual representations, you're operating at a different level of abstraction. You're thinking about the what and why of your architecture, and letting AI handle the how of representing it.

It's the difference between being a painter who has to mix their own pigments and one who can focus entirely on the artistic vision because all the tools just work.

The most important lesson here might be about how innovation actually happens in software. The features that transform user experiences aren't always the ones you plan for. Sometimes they emerge from the intersection of two seemingly unrelated decisions: choosing JSON as a serialization format, and experimenting with AI integration.

That boring export feature? It wasn't just about portability. It was accidentally creating a universal language that both humans and AI could speak fluently. And that changes everything.

Six months ago, I thought I was building a better diagramming tool. Today, I realize I've stumbled into something much more interesting: a new way of thinking about the relationship between human creativity and machine intelligence. And it all started with a simple JSON export button.

I'll finish with a personal quote:

Sometimes the most powerful architectures are the ones you never meant to design.